Miscellaneous

My research during my master's in music technology at Georgia Tech focused on a few different projects related to ambisonics, a really cool paradigm for full-sphere surround sound playback and manipulation.

I first became interested in ambisonics during my final year of undergrad, when I began to use it in sound installation contexts. I had the idea that sound carries with it the essence of a space, and that by manipulating field recordings you can distort this space, creating new surreal spaces solely through audio. I imagined that some sort of hybrid space could be created by seamlessly combining field recordings from different locations in an immersive surround sound environment. This led me to construct a basic 3-D speaker array, collect field recordings from around Atlanta, and create an interactive motion-tracking and playback system using SuperCollider and Max/MSP. The project ended up being a moderate success in practical terms but a great success in sparking my interest in ambisonics.

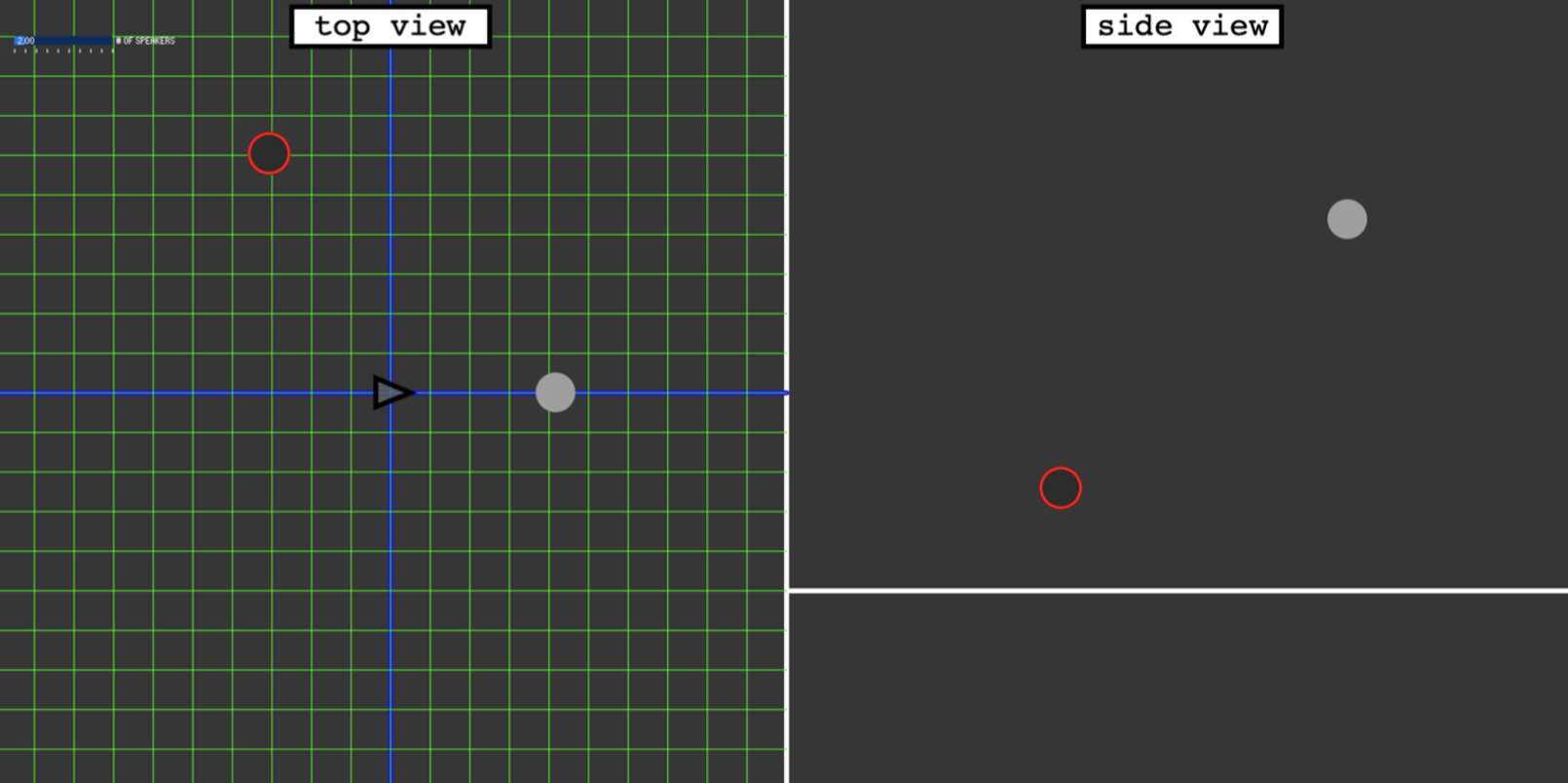

The next ambisonics-related project, which I tackled during the first semester of my master's, was to develop a tool for prototyping speaker layouts. When creating an ambisonic array, the layout of the speakers can affect how well spatialization works within it, and I wanted to see if there was a quick way to get an idea of how different arrays might perform using a digital simulation. I created a basic interface for moving an avatar around in Processing and connected it to SuperCollider for audio generation. Within this tool a user could place speakers, associate them with speaker feeds in an ambisonic decoder, and move around the virtual space as the audio was decoded to headphones using an HRTF decoder.
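To give a rough sense of what "associating speakers with feeds in an ambisonic decoder" means, here is a minimal Python sketch. It is not the code from the tool, which was built in Processing and SuperCollider. It assumes first-order B-format with SN3D-style gains and a very simple decoder that treats each speaker feed as a virtual cardioid microphone aimed at that speaker. The function names and the square layout are hypothetical, chosen only for illustration.

```python
import math

def direction(azimuth_deg, elevation_deg=0.0):
    """Unit vector (x, y, z) with x pointing front, y left, z up."""
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    return (math.cos(az) * math.cos(el),
            math.sin(az) * math.cos(el),
            math.sin(el))

def encode_first_order(sample, azimuth_deg, elevation_deg=0.0):
    """Encode one mono sample as first-order B-format (W, X, Y, Z).

    Assumption: SN3D-style gains, so W carries the sample unscaled.
    """
    x, y, z = direction(azimuth_deg, elevation_deg)
    return (sample, sample * x, sample * y, sample * z)

def decode_to_layout(bformat, layout):
    """Return one feed per speaker in `layout`, a list of (azimuth, elevation).

    Assumption: each feed is a virtual cardioid microphone pointed at the
    speaker, i.e. 0.5 * (W + B . speaker_direction).
    """
    w, bx, by, bz = bformat
    feeds = []
    for az, el in layout:
        x, y, z = direction(az, el)
        feeds.append(0.5 * (w + bx * x + by * y + bz * z))
    return feeds

# Hypothetical horizontal square layout, angles in degrees.
square = [(45, 0), (135, 0), (-135, 0), (-45, 0)]
feeds = decode_to_layout(encode_first_order(1.0, 45), square)
print([round(f, 3) for f in feeds])
```

Swapping `square` for a different list of speaker angles is the kind of quick comparison the tool was meant to make audible: the speaker nearest the source gets the largest feed, and the spread of the remaining gains depends on the layout.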

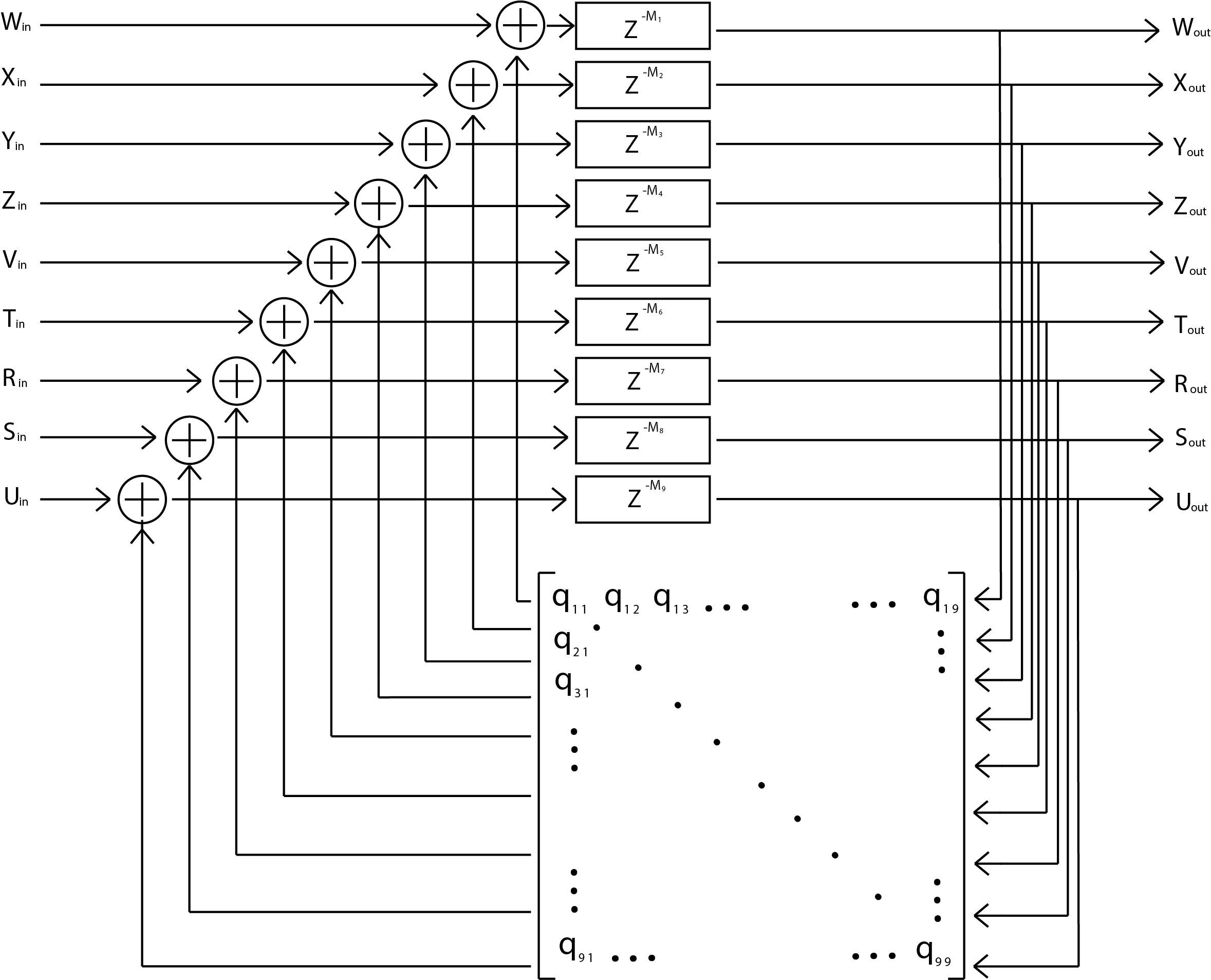

The following semester I began to think about why paradigms such as ambisonics are not more widely used. I concluded that one of the reasons was the lack of support for all the great digital tools that have been developed for stereo and mono formats, such as plugins and effects. This led me down the rabbit hole of exploring the development of a reverb algorithm native to ambisonics. Most of the semester was spent learning about the history of artificial reverberation methods. Towards the end of the semester I finally began developing my reverb, which was based on a feedback delay network and designed for second-order ambisonics. I aimed to extend the work of Joseph Anderson, generalizing his algorithm to higher-order ambisonics and improving upon its diffusive properties, but I have still not quite achieved a reverb I am happy with.
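For readers unfamiliar with feedback delay networks, the general idea can be sketched in a few lines of Python. This is a generic mono example, not my ambisonic reverb and not Joseph Anderson's algorithm. The delay lengths, the feedback gain, and the choice of a Hadamard mixing matrix are all assumptions made for illustration.

```python
def fdn_reverb(signal, delays=(149, 211, 263, 293), feedback=0.85):
    """Run a list of mono samples through a 4-line feedback delay network.

    Returns the wet signal only. All parameter values are illustrative.
    """
    # A 4x4 Hadamard matrix scaled by 1/2 is orthogonal, so it mixes the
    # delay lines without changing their total energy; `feedback` < 1 then
    # makes the tail decay.
    hadamard = [[1, 1, 1, 1],
                [1, -1, 1, -1],
                [1, 1, -1, -1],
                [1, -1, -1, 1]]
    buffers = [[0.0] * d for d in delays]   # one circular buffer per line
    positions = [0] * len(delays)
    out = []
    for x in signal:
        # Read the oldest sample from every delay line.
        taps = [buf[pos] for buf, pos in zip(buffers, positions)]
        out.append(sum(taps) / len(taps))
        # Mix the taps, scale them, add the input, and write back.
        for i, buf in enumerate(buffers):
            mixed = 0.5 * sum(hadamard[i][j] * taps[j] for j in range(4))
            buf[positions[i]] = x + feedback * mixed
            positions[i] = (positions[i] + 1) % len(buf)
    return out

# Impulse response: a single click followed by silence.
impulse_response = fdn_reverb([1.0] + [0.0] * 19999)
print(max(abs(v) for v in impulse_response[-1000:]))
```

The delay lengths are mutually prime so that echoes from different lines rarely coincide, which helps the tail sound dense rather than metallic. A multichannel version would need more lines and a larger mixing matrix, and that is where questions of diffusion across channels come in.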

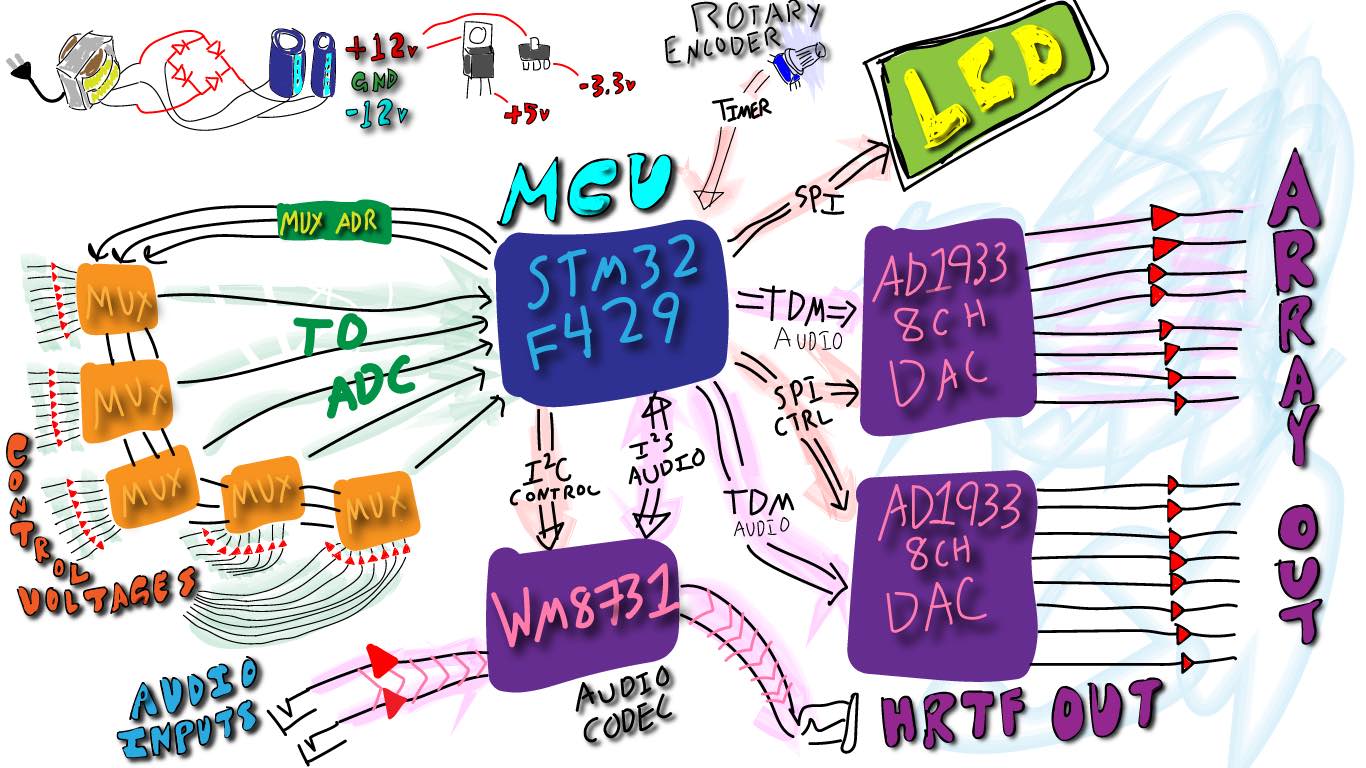

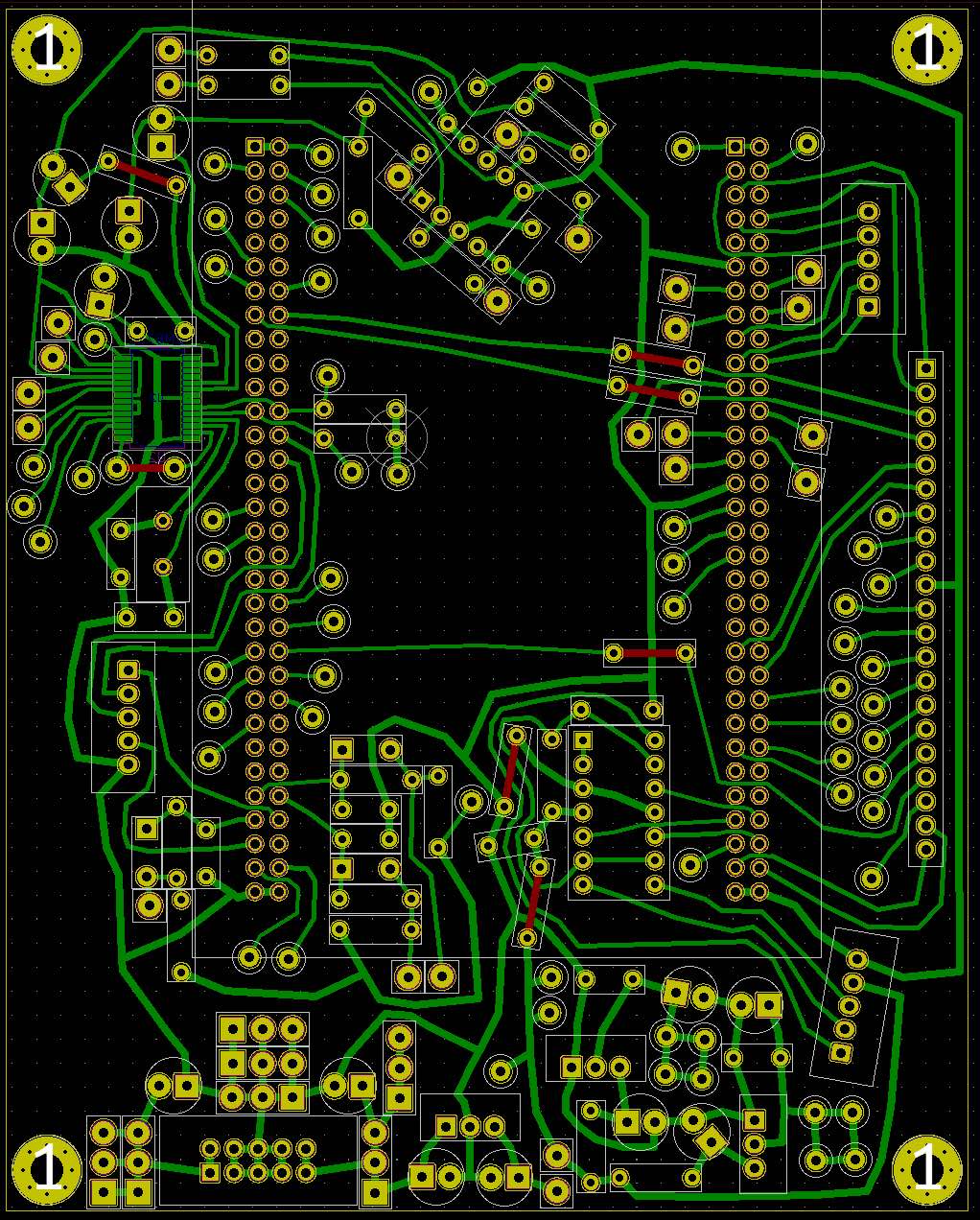

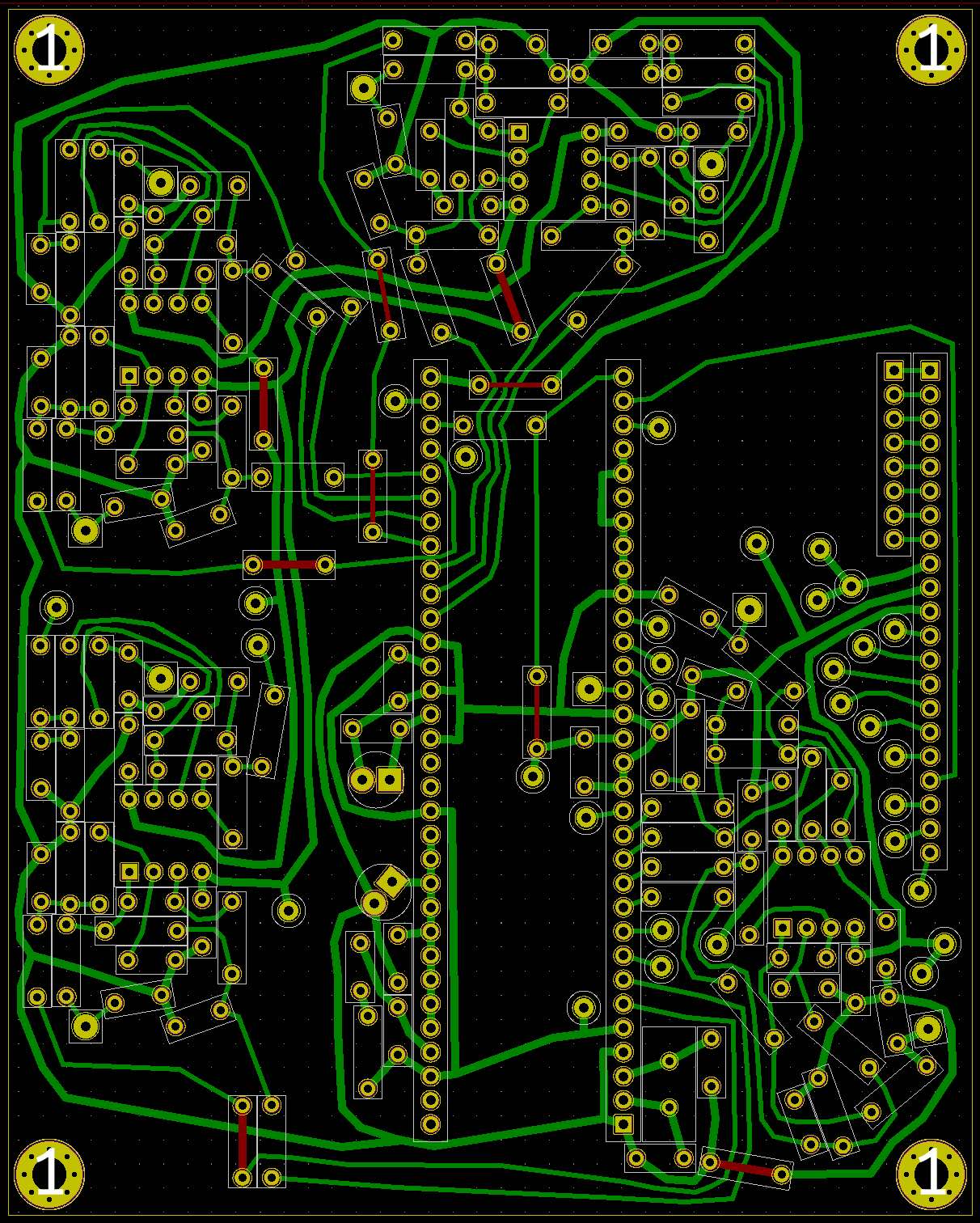

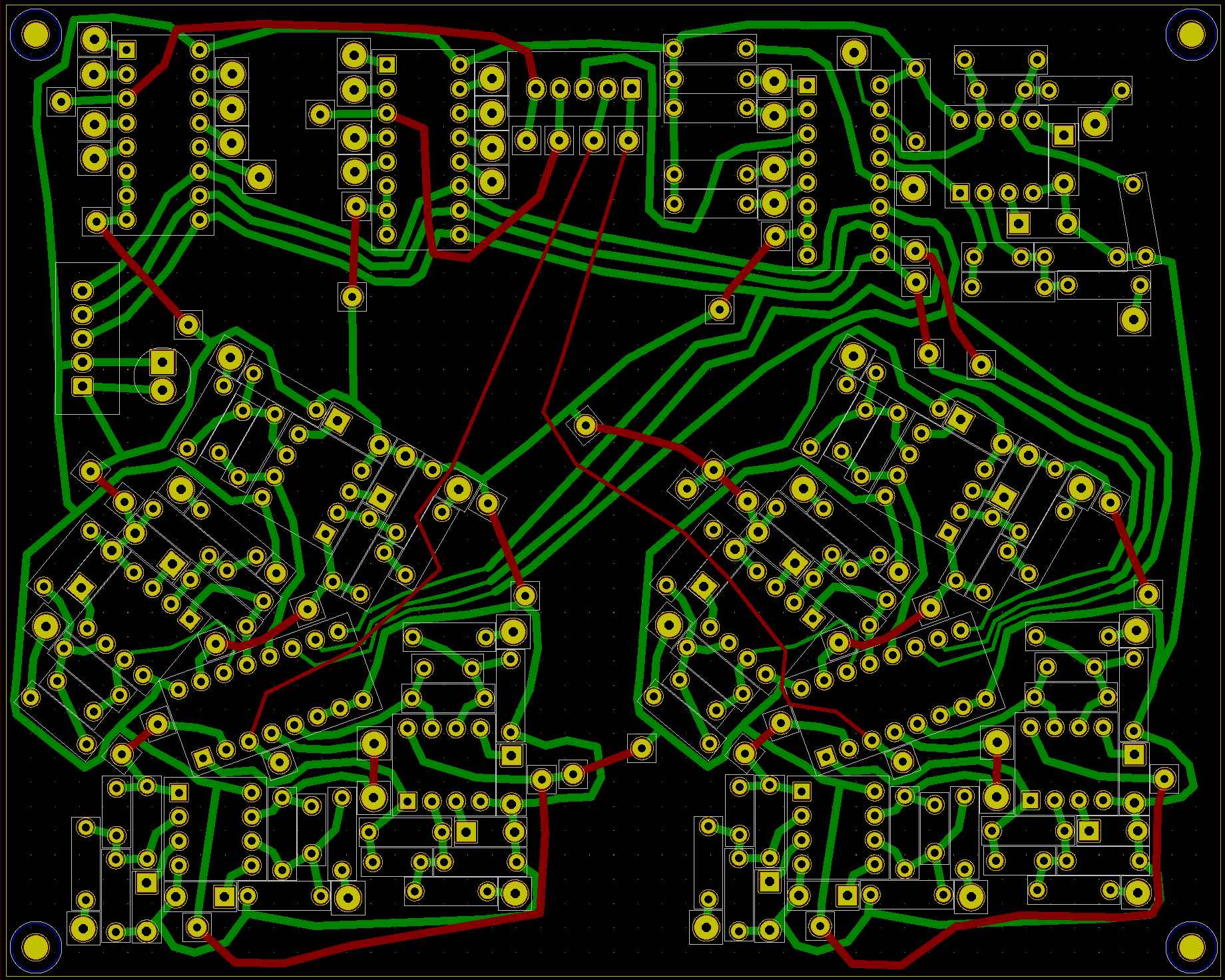

In my final year of graduate school I embarked on the lofty project of moving ambisonics into a more analog domain. I was inspired by some fully analog ambisonic decoder schematics I found on a website called Henry's Interesting Electronics, as I hadn't considered the possibility of ambisonics existing outside of a full computer. I have always found that dedicated hardware interfaces invite a lot more exploration than interfaces built into other technologies such as laptops, and I wanted something like that for ambisonics. I decided to attempt to develop a synthesizer module that takes care of all aspects of ambisonics, from encoding to transforming to decoding. I thought that if a system like this existed it would invite exploration of spatialization in compositional and performance contexts. The tactility of the interface, as well as the generic control voltages, would make it easy to try out new ways of controlling sound spatialization and would facilitate the creation of new tactile interfaces that could easily be plugged into the system.

I spent two semesters deep in the woods of hardware development and microcontroller programming before deciding the project needed to be rebuilt from the ground up. I greatly underestimated the processing power needed to implement ambisonic algorithms and was in a constant battle with the STM32F429 I was using, as well as my own limited programming skills. A more powerful microcontroller, a better-optimized program, or something like a Raspberry Pi would be better suited to this project. I was able to get a version of the module working with a two-channel decoder but not yet with the 16 channels I had wanted. Though I feel like all of my projects in grad school ultimately failed, I learned an immeasurable amount about ambisonics, reverberation, and both hardware and software development.